Chen-Hsuan Lin

Chen-Hsuan is the first name (neither just Chen nor Hsuan).

Hsuan is pronounced like "shoo-en" with a quick transition.

Research Scientist & Manager @ NVIDIA

I am a research scientist and manager in NVIDIA Cosmos Lab.

We are building NVIDIA Cosmos world models to advance physical AI, enabling machines to perceive, reason about, simulate, and interact with the real world.

My research focuses on modeling 3D structures, dynamics, and physics of the visual world, recognized as one of TIME Magazine's Best Inventions of 2023.

I received my Ph.D. in Robotics from Carnegie Mellon University, where I was supported by the NVIDIA Graduate Fellowship.

Before that, I received my B.S. from National Taiwan University.

Updates

Highlights

Research

Cosmos 3 is an open family of omnimodal world models that jointly process and generate language, images, video, audio, and action sequences. Its unified Mixture-of-Transformers architecture connects physical AI reasoning, world generation, simulation, and action modeling in one framework.

Coverage:

NVIDIA |

Axios |

The Decoder |

TechStartups |

GIGAZINE

Plenoptic Video Generation

CVPR 2026

paper •

project page •

BibTex

@inproceedings{fu2026plenoptic,

title={Plenoptic Video Generation},

author={Fu, Xiao and Tang, Shitao and Shi, Min and Liu, Xian and Gu, Jinwei and Liu, Ming-Yu and Lin, Dahua and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2026}

}

title={Plenoptic Video Generation},

author={Fu, Xiao and Tang, Shitao and Shi, Min and Liu, Xian and Gu, Jinwei and Liu, Ming-Yu and Lin, Dahua and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2026}

}

We tackle multi-view coherence in video re-rendering via autoregressive generation with camera-guided retrieval and self-conditioning. Retrieved observations synchronize appearance across viewpoints while ensuring temporal consistency. Our approach achieves state-of-the-art results on various benchmarks.

DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos

ICML 2026 (spotlight presentation)

paper •

project page •

code •

BibTex

@inproceedings{gao2026dreamdojo,

title={DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos},

author={Gao, Shenyuan and Liang, William and Zheng, Kaiyuan and Malik, Ayaan and Ye, Seonghyeon and Yu, Sihyun and Tseng, Wei-Cheng and Dong, Yuzhu and Mo, Kaichun and Lin, Chen-Hsuan and Ma, Qianli and Nah, Seungjun and Magne, Loic and Xiang, Jiannan and Xie, Yuqi and Zheng, Ruijie and Niu, Dantong and Tan, You Liang and Zentner, K.R. and Kurian, George and Indupuru, Suneel and Jannaty, Pooya and Gu, Jinwei and Zhang, Jun and Malik, Jitendra and Abbeel, Pieter and Liu, Ming-Yu and Zhu, Yuke and Jang, Joel and Fan, Linxi},

booktitle={International Conference on Machine Learning (ICML)},

year={2026}

}

title={DreamDojo: A Generalist Robot World Model from Large-Scale Human Videos},

author={Gao, Shenyuan and Liang, William and Zheng, Kaiyuan and Malik, Ayaan and Ye, Seonghyeon and Yu, Sihyun and Tseng, Wei-Cheng and Dong, Yuzhu and Mo, Kaichun and Lin, Chen-Hsuan and Ma, Qianli and Nah, Seungjun and Magne, Loic and Xiang, Jiannan and Xie, Yuqi and Zheng, Ruijie and Niu, Dantong and Tan, You Liang and Zentner, K.R. and Kurian, George and Indupuru, Suneel and Jannaty, Pooya and Gu, Jinwei and Zhang, Jun and Malik, Jitendra and Abbeel, Pieter and Liu, Ming-Yu and Zhu, Yuke and Jang, Joel and Fan, Linxi},

booktitle={International Conference on Machine Learning (ICML)},

year={2026}

}

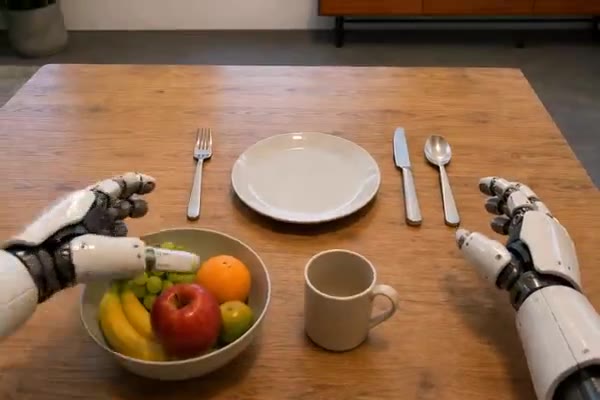

DreamDojo trains a generalist robot world model on 44,000+ hours of egocentric human video. Continuous latent actions bridge scarce robot action labels via knowledge transfer from unlabeled footage, enabling the model to support teleoperation, policy evaluation, and model-based planning.

World Simulation with Video Foundation Models for Physical AI

Technical report 2025

paper •

project page (predict2.5, transfer2.5) •

code (predict2.5 , transfer2.5 ) •

BibTex

@article{ali2025worldsimulation,

title={World Simulation with Video Foundation Models for Physical AI},

author={Ali, Arslan and Bai, Junjie and Bala, Maciej and Balaji, Yogesh and Blakeman, Aaron and Cai, Tiffany and Cao, Jiaxin and Cao, Tianshi and Cha, Elizabeth and Chao, Yu-Wei and Chattopadhyay, Prithvijit and Chen, Mike and Chen, Yongxin and Chen, Yu and Cheng, Shuai and Cui, Yin and Diamond, Jenna and Ding, Yifan and Fan, Jiaojiao and Fan, Linxi and Feng, Liang and Ferroni, Francesco and Fidler, Sanja and Fu, Xiao and Gao, Ruiyuan and Ge, Yunhao and Gu, Jinwei and Gupta, Aryaman and Gururani, Siddharth and El Hanafi, Imad and Hassani, Ali and Hao, Zekun and Huffman, Jacob and Jang, Joel and Jannaty, Pooya and Kautz, Jan and Lam, Grace and Li, Xuan and Li, Zhaoshuo and Liao, Maosheng and Lin, Chen-Hsuan and Lin, Tsung-Yi and Lin, Yen-Chen and Ling, Huan and Liu, Ming-Yu and Liu, Xian and Lu, Yifan and Luo, Alice and Ma, Qianli and Mao, Hanzi and Mo, Kaichun and Nah, Seungjun and Narang, Yashraj and Panaskar, Abhijeet and Pavao, Lindsey and Pham, Trung and Ramezanali, Morteza and Reda, Fitsum and Reed, Scott and Ren, Xuanchi and Shao, Haonan and Shen, Yue and Shi, Stella and Song, Shuran and Stefaniak, Bartosz and Sun, Shangkun and Tang, Shitao and Tasmeen, Sameena and Tchapmi, Lyne and Tseng, Wei-Cheng and Varghese, Jibin and Wang, Andrew Z. and Wang, Hao and Wang, Haoxiang and Wang, Heng and Wang, Ting-Chun and Wei, Fangyin and Xu, Jiashu and Yang, Dinghao and Yang, Xiaodong and Ye, Haotian and Ye, Seonghyeon and Zeng, Xiaohui and Zhang, Jing and Zhang, Qinsheng and Zheng, Kaiwen and Zhu, Andrew and Zhu, Yuke},

journal={arXiv preprint arXiv:2511.00062},

year={2025}

}

title={World Simulation with Video Foundation Models for Physical AI},

author={Ali, Arslan and Bai, Junjie and Bala, Maciej and Balaji, Yogesh and Blakeman, Aaron and Cai, Tiffany and Cao, Jiaxin and Cao, Tianshi and Cha, Elizabeth and Chao, Yu-Wei and Chattopadhyay, Prithvijit and Chen, Mike and Chen, Yongxin and Chen, Yu and Cheng, Shuai and Cui, Yin and Diamond, Jenna and Ding, Yifan and Fan, Jiaojiao and Fan, Linxi and Feng, Liang and Ferroni, Francesco and Fidler, Sanja and Fu, Xiao and Gao, Ruiyuan and Ge, Yunhao and Gu, Jinwei and Gupta, Aryaman and Gururani, Siddharth and El Hanafi, Imad and Hassani, Ali and Hao, Zekun and Huffman, Jacob and Jang, Joel and Jannaty, Pooya and Kautz, Jan and Lam, Grace and Li, Xuan and Li, Zhaoshuo and Liao, Maosheng and Lin, Chen-Hsuan and Lin, Tsung-Yi and Lin, Yen-Chen and Ling, Huan and Liu, Ming-Yu and Liu, Xian and Lu, Yifan and Luo, Alice and Ma, Qianli and Mao, Hanzi and Mo, Kaichun and Nah, Seungjun and Narang, Yashraj and Panaskar, Abhijeet and Pavao, Lindsey and Pham, Trung and Ramezanali, Morteza and Reda, Fitsum and Reed, Scott and Ren, Xuanchi and Shao, Haonan and Shen, Yue and Shi, Stella and Song, Shuran and Stefaniak, Bartosz and Sun, Shangkun and Tang, Shitao and Tasmeen, Sameena and Tchapmi, Lyne and Tseng, Wei-Cheng and Varghese, Jibin and Wang, Andrew Z. and Wang, Hao and Wang, Haoxiang and Wang, Heng and Wang, Ting-Chun and Wei, Fangyin and Xu, Jiashu and Yang, Dinghao and Yang, Xiaodong and Ye, Haotian and Ye, Seonghyeon and Zeng, Xiaohui and Zhang, Jing and Zhang, Qinsheng and Zheng, Kaiwen and Zhu, Andrew and Zhu, Yuke},

journal={arXiv preprint arXiv:2511.00062},

year={2025}

}

Cosmos-Predict2.5 is a flow-based world foundation model unifying text-, image-, and video-conditioned generation at 2B/14B scales with RL refinement. Cosmos-Transfer2.5 converts structured inputs — segmentation, depth, edge maps — into high-fidelity video for simulation and data generation.

ViPE: Video Pose Engine for 3D Geometric Perception

Technical report 2025

paper •

project page •

BibTex

@article{huang2025vipe,

title={ViPE: Video Pose Engine for 3D Geometric Perception},

author={Huang, Jiahui and Zhou, Qunjie and Rabeti, Hesam and Korovko, Aleksandr and Ling, Huan and Ren, Xuanchi and Shen, Tianchang and Gao, Jun and Slepichev, Dmitry and Lin, Chen-Hsuan and Ren, Jiawei and Xie, Kevin and Biswas, Joydeep and Leal-Taixe, Laura and Fidler, Sanja},

journal={arXiv preprint arXiv:2508.10934},

year={2025}

}

title={ViPE: Video Pose Engine for 3D Geometric Perception},

author={Huang, Jiahui and Zhou, Qunjie and Rabeti, Hesam and Korovko, Aleksandr and Ling, Huan and Ren, Xuanchi and Shen, Tianchang and Gao, Jun and Slepichev, Dmitry and Lin, Chen-Hsuan and Ren, Jiawei and Xie, Kevin and Biswas, Joydeep and Leal-Taixe, Laura and Fidler, Sanja},

journal={arXiv preprint arXiv:2508.10934},

year={2025}

}

ViPE recovers camera intrinsics, per-frame motion, and dense depth from unconstrained in-the-wild videos without any known camera parameters. It handles diverse footage from selfies to dashcam recordings and scales to auto-annotate large collections. We also release a dataset of ~96M annotated frames.

Scenethesis: A Language and Vision Agentic Framework for 3D Scene Generation

ICLR 2026

paper •

project page •

BibTex

@inproceedings{ling2026scenethesis,

title={Scenethesis: A Language and Vision Agentic Framework for 3D Scene Generation},

author={Ling, Lu and Lin, Chen-Hsuan and Lin, Tsung-Yi and Ding, Yifan and Zeng, Yu and Sheng, Yichen and Ge, Yunhao and Liu, Ming-Yu and Bera, Aniket and Li, Zhaoshuo},

booktitle={International Conference on Learning Representations ({ICLR})},

year={2026}

}

title={Scenethesis: A Language and Vision Agentic Framework for 3D Scene Generation},

author={Ling, Lu and Lin, Chen-Hsuan and Lin, Tsung-Yi and Ding, Yifan and Zeng, Yu and Sheng, Yichen and Ge, Yunhao and Liu, Ming-Yu and Bera, Aniket and Li, Zhaoshuo},

booktitle={International Conference on Learning Representations ({ICLR})},

year={2026}

}

Scenethesis generates realistic 3D scenes from text without any task-specific training. An LLM drafts a coarse layout, vision modules generate image guidance and extract inter-object relations, and an optimization step enforces physical plausibility. The result is diverse, fully editable 3D scene arrangements.

Dynamic Camera Poses and Where to Find Them

CVPR 2025

paper •

project page •

dataset •

BibTex

@inproceedings{rockwell2025dynamic,

title={Dynamic Camera Poses and Where to Find Them},

author={Rockwell, Chris and Tung, Joseph and Lin, Tsung-Yi and Liu, Ming-Yu and Fouhey, David F. and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2025}

}

title={Dynamic Camera Poses and Where to Find Them},

author={Rockwell, Chris and Tung, Joseph and Lin, Tsung-Yi and Liu, Ming-Yu and Fouhey, David F. and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2025}

}

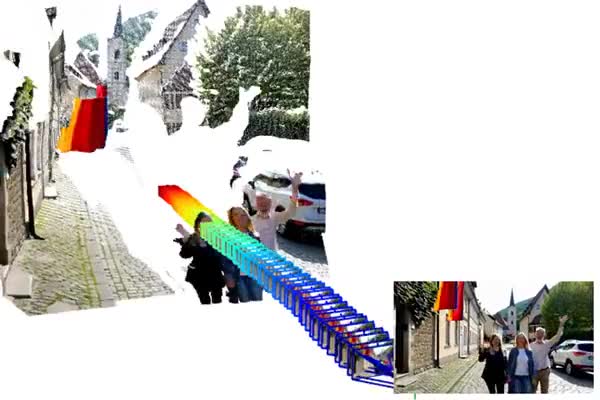

DynPose-100K is a large-scale dataset of dynamic internet videos annotated with camera poses. The pipeline uses task-specific and generalist models for filtering, then point tracking, dynamic masking, and structure-from-motion for accurate pose estimation across diverse real-world scenes.

Cosmos World Foundation Model Platform for Physical AI

Best AI + Best overall of CES 2025 Technical report 2025

paper •

website •

video •

project page •

code •

BibTex

@article{nvidia2025cosmos,

title={Cosmos World Foundation Model Platform for Physical AI},

author={Agarwal, Niket and Ali, Arslan and Bala, Maciej and Balaji, Yogesh and Barker, Erik and Cai, Tiffany and Chattopadhyay, Prithvijit and Chen, Yongxin and Cui, Yin and Ding, Yifan and Dworakowski, Daniel and Fan, Jiaojiao and Fenzi, Michele and Ferroni, Francesco and Fidler, Sanja and Fox, Dieter and Ge, Songwei and Ge, Yunhao and Gu, Jinwei and Gururani, Siddharth and He, Ethan and Huang, Jiahui and Huffman, Jacob and Jannaty, Pooya and Jin, Jingyi and Kim, Seung Wook and Klár, Gergely and Lam, Grace and Lan, Shiyi and Leal-Taixe, Laura and Li, Anqi and Li, Zhaoshuo and Lin, Chen-Hsuan and Lin, Tsung-Yi and Ling, Huan and Liu, Ming-Yu and Liu, Xian and Luo, Alice and Ma, Qianli and Mao, Hanzi and Mo, Kaichun and Mousavian, Arsalan and Nah, Seungjun and Niverty, Sriharsha and Page, David and Paschalidou, Despoina and Patel, Zeeshan and Pavao, Lindsey and Ramezanali, Morteza and Reda, Fitsum and Ren, Xiaowei and Sabavat, Vasanth Rao Naik and Schmerling, Ed and Shi, Stella and Stefaniak, Bartosz and Tang, Shitao and Tchapmi, Lyne and Tredak, Przemek and Tseng, Wei-Cheng and Varghese, Jibin and Wang, Hao and Wang, Haoxiang and Wang, Heng and Wang, Ting-Chun and Wei, Fangyin and Wei, Xinyue and Wu, Jay Zhangjie and Xu, Jiashu and Yang, Wei and Yen-Chen, Lin and Zeng, Xiaohui and Zeng, Yu and Zhang, Jing and Zhang, Qinsheng and Zhang, Yuxuan and Zhao, Qingqing and Zolkowski, Artur},

journal={arXiv preprint arXiv:2501.03575},

year={2025}

}

title={Cosmos World Foundation Model Platform for Physical AI},

author={Agarwal, Niket and Ali, Arslan and Bala, Maciej and Balaji, Yogesh and Barker, Erik and Cai, Tiffany and Chattopadhyay, Prithvijit and Chen, Yongxin and Cui, Yin and Ding, Yifan and Dworakowski, Daniel and Fan, Jiaojiao and Fenzi, Michele and Ferroni, Francesco and Fidler, Sanja and Fox, Dieter and Ge, Songwei and Ge, Yunhao and Gu, Jinwei and Gururani, Siddharth and He, Ethan and Huang, Jiahui and Huffman, Jacob and Jannaty, Pooya and Jin, Jingyi and Kim, Seung Wook and Klár, Gergely and Lam, Grace and Lan, Shiyi and Leal-Taixe, Laura and Li, Anqi and Li, Zhaoshuo and Lin, Chen-Hsuan and Lin, Tsung-Yi and Ling, Huan and Liu, Ming-Yu and Liu, Xian and Luo, Alice and Ma, Qianli and Mao, Hanzi and Mo, Kaichun and Mousavian, Arsalan and Nah, Seungjun and Niverty, Sriharsha and Page, David and Paschalidou, Despoina and Patel, Zeeshan and Pavao, Lindsey and Ramezanali, Morteza and Reda, Fitsum and Ren, Xiaowei and Sabavat, Vasanth Rao Naik and Schmerling, Ed and Shi, Stella and Stefaniak, Bartosz and Tang, Shitao and Tchapmi, Lyne and Tredak, Przemek and Tseng, Wei-Cheng and Varghese, Jibin and Wang, Hao and Wang, Haoxiang and Wang, Heng and Wang, Ting-Chun and Wei, Fangyin and Wei, Xinyue and Wu, Jay Zhangjie and Xu, Jiashu and Yang, Wei and Yen-Chen, Lin and Zeng, Xiaohui and Zeng, Yu and Zhang, Jing and Zhang, Qinsheng and Zhang, Yuxuan and Zhao, Qingqing and Zolkowski, Artur},

journal={arXiv preprint arXiv:2501.03575},

year={2025}

}

Cosmos is an open-source world foundation model platform for physical AI. It provides pre-trained world models, video tokenizers, post-training recipes, and a video curation pipeline — a comprehensive toolkit for the robotics and autonomous vehicle communities to build specialized world models.

Coverage:

NVIDIA (news and blog) |

TechCrunch |

VentureBeat |

WIRED |

Fortune |

Reuters |

Business Insider |

BBC |

Forbes (news, news and news) |

New York Times |

Wall Street Journal |

MIT Technology Review |

Newsweek |

Bloomberg |

Financial Times |

Two Minute Papers

Edify 3D: Scalable High-Quality 3D Asset Generation

Technical report 2024

paper •

project page •

BibTex

@article{nvidia2024edify3d,

title={Edify 3D: Scalable High-Quality 3D Asset Generation},

author={NVIDIA and Bala, Maciej and Cui, Yin and Ding, Yifan and Ge, Yunhao and Hao, Zekun and Hasselgren, Jon and Huffman, Jacob and Jin, Jingyi and Lewis, J.P. and Li, Zhaoshuo and Lin, Chen-Hsuan and Lin, Yen-Chen and Lin, Tsung-Yi and Liu, Ming-Yu and Luo, Alice and Ma, Qianli and Munkberg, Jacob and Shi, Stella and Wei, Fangyin and Xiang, Donglai and Xu, Jiashu and Zeng, Xiaohui and Zhang, Qinsheng},

journal={arXiv preprint arXiv:2411.07135},

year={2024}

}

title={Edify 3D: Scalable High-Quality 3D Asset Generation},

author={NVIDIA and Bala, Maciej and Cui, Yin and Ding, Yifan and Ge, Yunhao and Hao, Zekun and Hasselgren, Jon and Huffman, Jacob and Jin, Jingyi and Lewis, J.P. and Li, Zhaoshuo and Lin, Chen-Hsuan and Lin, Yen-Chen and Lin, Tsung-Yi and Liu, Ming-Yu and Luo, Alice and Ma, Qianli and Munkberg, Jacob and Shi, Stella and Wei, Fangyin and Xiang, Donglai and Xu, Jiashu and Zeng, Xiaohui and Zhang, Qinsheng},

journal={arXiv preprint arXiv:2411.07135},

year={2024}

}

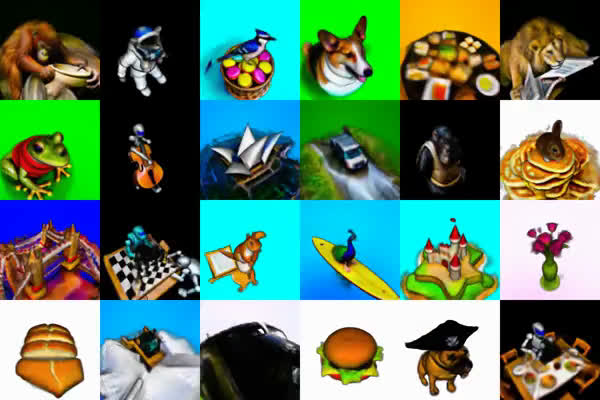

Edify 3D enables scalable high-quality 3D asset generation from text or image inputs. It synthesizes consistent multi-view RGB and surface normals via a diffusion model, then lifts them to 3D shape, high-resolution textures, and PBR materials — delivering a production-ready asset in under 2 minutes.

Coverage:

NVIDIA (blog and blog) |

Forbes |

VentureBeat (news and news) |

Two Minute Papers |

fxguide |

Animation World Network

GenUSD: 3D Scene Generation Made Easy

SIGGRAPH 2024 Real-Time Live!

paper •

presentation •

BibTex

@inproceedings{lin2024genusd,

title={GenUSD: 3D Scene Generation Made Easy},

author={Lin, Tsung-Yi and Lin, Chen-Hsuan and Cui, Yin and Ge, Yunhao and Nah, Seungjun and Mallya, Arun and Hao, Zekun and Ding, Yifan and Mao, Hanzi and Li, Zhaoshuo and Lin, Yen-Chen and Zeng, Xiaohui and Zhang, Qinsheng and Xiang, Donglai and Ma, Qianli and Lewis, J.P. and Jin, Jingyi and Jannaty, Pooya and Liu, Ming-Yu},

booktitle={ACM SIGGRAPH 2024 Real-Time Live!},

year={2024}

}

title={GenUSD: 3D Scene Generation Made Easy},

author={Lin, Tsung-Yi and Lin, Chen-Hsuan and Cui, Yin and Ge, Yunhao and Nah, Seungjun and Mallya, Arun and Hao, Zekun and Ding, Yifan and Mao, Hanzi and Li, Zhaoshuo and Lin, Yen-Chen and Zeng, Xiaohui and Zhang, Qinsheng and Xiang, Donglai and Ma, Qianli and Lewis, J.P. and Jin, Jingyi and Jannaty, Pooya and Liu, Ming-Yu},

booktitle={ACM SIGGRAPH 2024 Real-Time Live!},

year={2024}

}

GenUSD transforms natural language prompts into realistic, fully editable 3D scenes in USD (Universal Scene Description) format. It combines an LLM for high-level scene layout planning with Edify 3D for high-quality asset generation, as demonstrated live at SIGGRAPH 2024 Real-Time Live!

Coverage:

NVIDIA

ATT3D: Amortized Text-to-3D Object Synthesis

ICCV 2023

paper •

project page •

BibTex

@inproceedings{lorraine2023att3d,

title={ATT3D: Amortized Text-to-3D Object Synthesis},

author={Lorraine, Jonathan and Xie, Kevin and Zeng, Xiaohui and Lin, Chen-Hsuan and Takikawa, Towaki and Sharp, Nicholas and Lin, Tsung-Yi and Liu, Ming-Yu and Fidler, Sanja and Lucas, James},

booktitle={IEEE International Conference on Computer Vision ({ICCV})},

year={2023}

}

title={ATT3D: Amortized Text-to-3D Object Synthesis},

author={Lorraine, Jonathan and Xie, Kevin and Zeng, Xiaohui and Lin, Chen-Hsuan and Takikawa, Towaki and Sharp, Nicholas and Lin, Tsung-Yi and Liu, Ming-Yu and Fidler, Sanja and Lucas, James},

booktitle={IEEE International Conference on Computer Vision ({ICCV})},

year={2023}

}

Generating high-quality 3D assets from text typically requires lengthy per-prompt optimization. We instead train a unified model that amortizes this across many prompts, sharing computation and enabling knowledge transfer — unlocking smooth interpolation between text-described 3D shapes.

Neuralangelo: High-Fidelity Neural Surface Reconstruction

TIME's Best Inventions of 2023 CVPR 2023

paper •

project page •

code •

BibTex

@inproceedings{li2023neuralangelo,

title={Neuralangelo: High-Fidelity Neural Surface Reconstruction},

author={Li, Zhaoshuo and M\"uller, Thomas and Evans, Alex and Taylor, Russell H and Unberath, Mathias and Liu, Ming-Yu and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2023}

}

title={Neuralangelo: High-Fidelity Neural Surface Reconstruction},

author={Li, Zhaoshuo and M\"uller, Thomas and Evans, Alex and Taylor, Russell H and Unberath, Mathias and Liu, Ming-Yu and Lin, Chen-Hsuan},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2023}

}

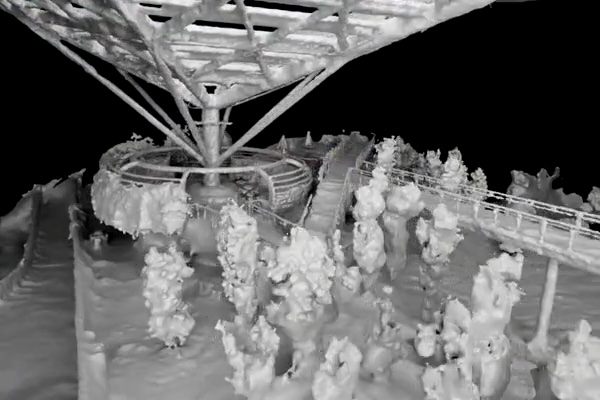

We achieve high-fidelity 3D surface reconstruction from RGB video — named a TIME Best Invention of 2023. Multi-resolution hash grids with numerical gradients and a coarse-to-fine optimization strategy recover fine-grained geometric details of large-scale scenes at unprecedented fidelity.

Coverage:

NVIDIA |

TIME (Best Inventions) |

VentureBeat |

The Verge |

Engadget |

WIRED |

BBC Science Focus |

Yahoo! News |

CG Channel |

Fast Company |

Two Minute Papers (video and video) |

fxguide |

Computerworld |

PetaPixel |

MarkTechPost (blog and blog) |

Creative Bloq

Magic3D: High-Resolution Text-to-3D Content Creation

CVPR 2023 (highlight)

paper •

project page •

BibTex

@inproceedings{lin2023magic3d,

title={Magic3D: High-Resolution Text-to-3D Content Creation},

author={Lin, Chen-Hsuan and Gao, Jun and Tang, Luming and Takikawa, Towaki and Zeng, Xiaohui and Huang, Xun and Kreis, Karsten and Fidler, Sanja and Liu, Ming-Yu and Lin, Tsung-Yi},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2023}

}

title={Magic3D: High-Resolution Text-to-3D Content Creation},

author={Lin, Chen-Hsuan and Gao, Jun and Tang, Luming and Takikawa, Towaki and Zeng, Xiaohui and Huang, Xun and Kreis, Karsten and Fidler, Sanja and Liu, Ming-Yu and Lin, Tsung-Yi},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2023}

}

We generate high-quality 3D textured meshes from text, with support for editing and image-conditioned control. Our two-stage pipeline builds a coarse NeRF via sparse 3D hash structures, then refines to a high-resolution mesh via latent diffusion — achieving 2x faster generation than prior work.

Coverage:

Ars Technica |

Forbes |

MarkTechPost |

Gigazine |

3D Printing Industry

Earlier Works

BARF: Bundle-Adjusting Neural Radiance Fields

ICCV 2021 (oral presentation)—

paper •

project page •

presentation •

code •

coverage •

BibTex

@inproceedings{lin2021barf,

title={BARF: Bundle-Adjusting Neural Radiance Fields},

author={Lin, Chen-Hsuan and Ma, Wei-Chiu and Torralba, Antonio and Lucey, Simon},

booktitle={IEEE International Conference on Computer Vision ({ICCV})},

year={2021}

}

title={BARF: Bundle-Adjusting Neural Radiance Fields},

author={Lin, Chen-Hsuan and Ma, Wei-Chiu and Torralba, Antonio and Lucey, Simon},

booktitle={IEEE International Conference on Computer Vision ({ICCV})},

year={2021}

}

SDF-SRN: Learning Signed Distance 3D Object Reconstruction from Static Images

NeurIPS 2020—

paper •

project page •

code •

BibTex

@inproceedings{lin2020sdfsrn,

title={SDF-SRN: Learning Signed Distance 3D Object Reconstruction from Static Images},

author={Lin, Chen-Hsuan and Wang, Chaoyang and Lucey, Simon},

booktitle={Advances in Neural Information Processing Systems ({NeurIPS})},

year={2020}

}

title={SDF-SRN: Learning Signed Distance 3D Object Reconstruction from Static Images},

author={Lin, Chen-Hsuan and Wang, Chaoyang and Lucey, Simon},

booktitle={Advances in Neural Information Processing Systems ({NeurIPS})},

year={2020}

}

Deep NRSfM++: Towards Unsupervised 2D-3D Lifting in the Wild

Photometric Mesh Optimization for Video-Aligned 3D Object Reconstruction

CVPR 2019—

paper •

project page •

code •

BibTex

@inproceedings{lin2019photometric,

title={Photometric Mesh Optimization for Video-Aligned 3D Object Reconstruction},

author={Lin, Chen-Hsuan and Wang, Oliver and Russell, Bryan C and Shechtman, Eli and Kim, Vladimir G and Fisher, Matthew and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2019}

}

title={Photometric Mesh Optimization for Video-Aligned 3D Object Reconstruction},

author={Lin, Chen-Hsuan and Wang, Oliver and Russell, Bryan C and Shechtman, Eli and Kim, Vladimir G and Fisher, Matthew and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2019}

}

ST-GAN: Spatial Transformer Generative Adversarial Networks for Image Compositing

CVPR 2018—

paper •

project page •

code •

BibTex

@inproceedings{lin2018stgan,

title={ST-GAN: Spatial Transformer Generative Adversarial Networks for Image Compositing},

author={Lin, Chen-Hsuan and Yumer, Ersin and Wang, Oliver and Shechtman, Eli and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2018}

}

title={ST-GAN: Spatial Transformer Generative Adversarial Networks for Image Compositing},

author={Lin, Chen-Hsuan and Yumer, Ersin and Wang, Oliver and Shechtman, Eli and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2018}

}

Deep-LK for Efficient Adaptive Object Tracking

Learning Efficient Point Cloud Generation for Dense 3D Object Reconstruction

AAAI 2018 (oral presentation)—

paper •

project page •

code •

BibTex

@inproceedings{lin2018learning,

title={Learning Efficient Point Cloud Generation for Dense 3D Object Reconstruction},

author={Lin, Chen-Hsuan and Kong, Chen and Lucey, Simon},

booktitle={AAAI Conference on Artificial Intelligence ({AAAI})},

year={2018}

}

title={Learning Efficient Point Cloud Generation for Dense 3D Object Reconstruction},

author={Lin, Chen-Hsuan and Kong, Chen and Lucey, Simon},

booktitle={AAAI Conference on Artificial Intelligence ({AAAI})},

year={2018}

}

Object-Centric Photometric Bundle Adjustment with Deep Shape Prior

WACV 2018—

paper •

extension •

BibTex

@inproceedings{zhu2017object,

title={Object-Centric Photometric Bundle Adjustment with Deep Shape Prior},

author={Zhu, Rui and Wang, Chaoyang and Lin, Chen-Hsuan and Wang, Ziyan and Lucey, Simon},

booktitle={IEEE Winter Conference on Applications of Computer Vision ({WACV})},

year={2018}

}

title={Object-Centric Photometric Bundle Adjustment with Deep Shape Prior},

author={Zhu, Rui and Wang, Chaoyang and Lin, Chen-Hsuan and Wang, Ziyan and Lucey, Simon},

booktitle={IEEE Winter Conference on Applications of Computer Vision ({WACV})},

year={2018}

}

Inverse Compositional Spatial Transformer Networks

CVPR 2017 (oral presentation)—

paper •

project page •

presentation •

code •

BibTex

@inproceedings{lin2017inverse,

title={Inverse Compositional Spatial Transformer Networks},

author={Lin, Chen-Hsuan and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2017}

}

title={Inverse Compositional Spatial Transformer Networks},

author={Lin, Chen-Hsuan and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2017}

}

Using Locally Corresponding CAD Models for Dense 3D Reconstructions from a Single Image

CVPR 2017—

paper •

BibTex

@inproceedings{kong2017using,

title={Using Locally Corresponding CAD Models for Dense 3D Reconstructions from a Single Image},

author={Kong, Chen and Lin, Chen-Hsuan and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2017}

}

title={Using Locally Corresponding CAD Models for Dense 3D Reconstructions from a Single Image},

author={Kong, Chen and Lin, Chen-Hsuan and Lucey, Simon},

booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},

year={2017}

}

The Conditional Lucas & Kanade Algorithm

ECCV 2016—

paper •

project page •

code •

BibTex

@inproceedings{lin2016conditional,

title={The Conditional Lucas \& Kanade Algorithm},

author={Lin, Chen-Hsuan and Zhu, Rui and Lucey, Simon},

booktitle={European Conference on Computer Vision (ECCV)},

pages={793--808},

year={2016},

organization={Springer International Publishing}

}

title={The Conditional Lucas \& Kanade Algorithm},

author={Lin, Chen-Hsuan and Zhu, Rui and Lucey, Simon},

booktitle={European Conference on Computer Vision (ECCV)},

pages={793--808},

year={2016},

organization={Springer International Publishing}

}

Ph.D. Dissertation

Learning 3D Registration and Reconstruction from the Visual World

Carnegie Mellon University, 2021

thesis •

dissertation talk •

BibTex

@phdthesis{lin2021learning,

title={Learning 3D Registration and Reconstruction from the Visual World},

author={Lin, Chen-Hsuan},

year={2021},

month={June},

school={The Robotics Institute, Carnegie Mellon University},

address={Pittsburgh, PA},

number={CMU-RI-TR-21-13},

}

title={Learning 3D Registration and Reconstruction from the Visual World},

author={Lin, Chen-Hsuan},

year={2021},

month={June},

school={The Robotics Institute, Carnegie Mellon University},

address={Pittsburgh, PA},

number={CMU-RI-TR-21-13},

}

Experience

NVIDIA

(2021 – present) Research Scientist & Manager

Carnegie Mellon University

(2014 – 2021) Graduate Research Assistant

Facebook AI Research

(Meta AI, 2019) Research Intern

Adobe Research

(2017, 2018) Research Intern