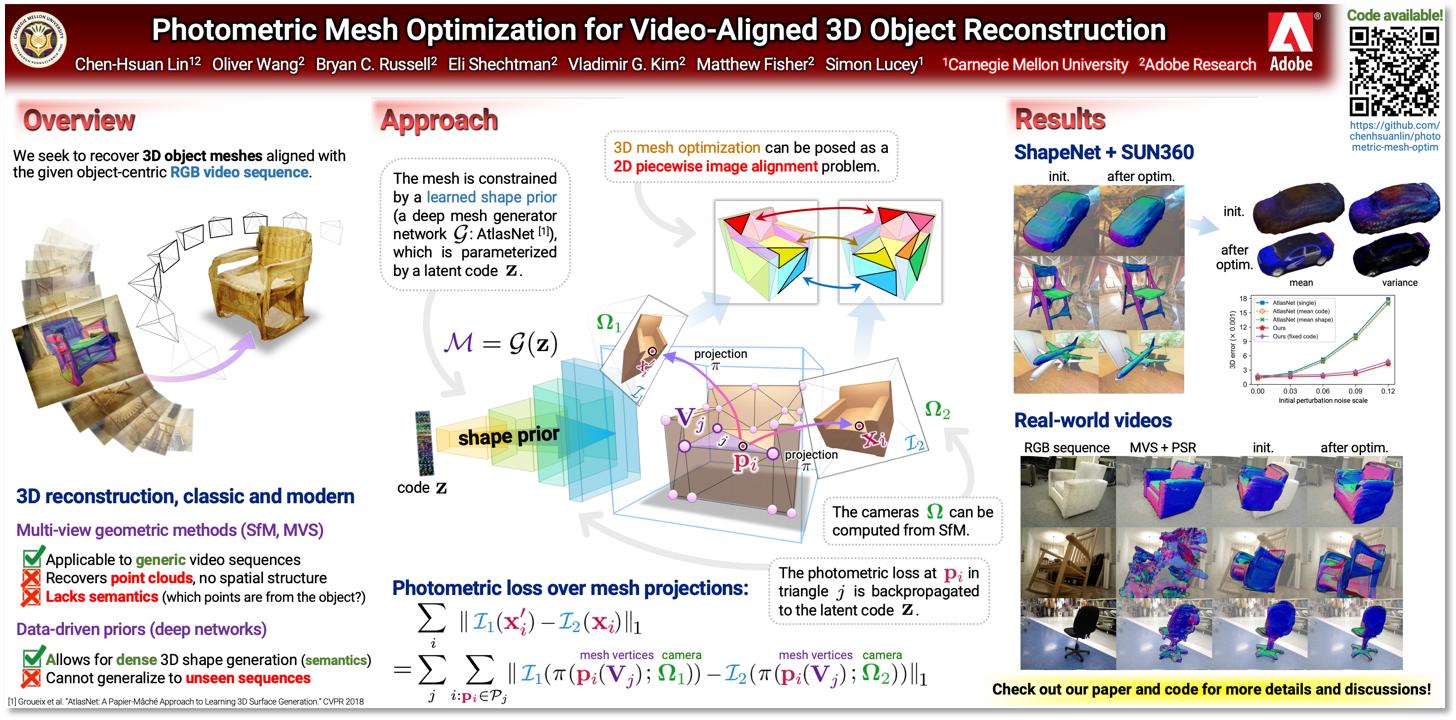

Abstract

In this paper, we address the problem of 3D object mesh reconstruction from RGB videos. Our approach combines the best of multi-view geometric and data-driven methods for 3D reconstruction by optimizing object meshes for multi-view photometric consistency while constraining mesh deformations with a shape prior. We pose this as a piecewise image alignment problem for each mesh face projection. Our approach allows us to update shape parameters from the photometric error without any depth or mask information. Moreover, we show how to avoid a degeneracy of zero photometric gradients via rasterizing from a virtual viewpoint. We demonstrate 3D object mesh reconstruction results from both synthetic and real-world videos with our photometric mesh optimization, which is unachievable with either naive mesh generation networks or traditional pipelines of surface reconstruction without heavy manual post-processing.
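To make the notion of photometric error from the abstract concrete, here is a minimal sketch in plain Python. It is not the authors' implementation: the function names `bilinear_sample` and `photometric_error` are hypothetical, the warp is simplified to a 2D translation (the paper warps each mesh face projection instead), and the mean absolute difference is an assumed choice of error.

```python
import math

def bilinear_sample(image, x, y):
    """Sample a grayscale image (list of rows) at a real-valued (x, y) location."""
    h, w = len(image), len(image[0])
    # Clamp so that the 2x2 neighbourhood stays inside the image.
    x = min(max(x, 0.0), w - 1.0)
    y = min(max(y, 0.0), h - 1.0)
    x0, y0 = int(math.floor(x)), int(math.floor(y))
    x1, y1 = min(x0 + 1, w - 1), min(y0 + 1, h - 1)
    ax, ay = x - x0, y - y0
    top = (1 - ax) * image[y0][x0] + ax * image[y0][x1]
    bottom = (1 - ax) * image[y1][x0] + ax * image[y1][x1]
    return (1 - ay) * top + ay * bottom

def photometric_error(template, source, pixels, shift):
    """Mean absolute intensity difference between the template pixels and
    the source image sampled at the shifted pixel locations.
    The translation-only warp is a simplifying assumption."""
    dx, dy = shift
    total = 0.0
    for (x, y) in pixels:
        total += abs(bilinear_sample(source, x + dx, y + dy) - template[y][x])
    return total / len(pixels)

# Toy data: a 5x5 intensity ramp, and the same ramp moved one pixel to the right.
template = [[10 + x + 2 * y for x in range(5)] for y in range(5)]
source = [[10 + (x - 1) + 2 * y for x in range(5)] for y in range(5)]
# Interior pixels only, so shifted samples never leave the image.
pixels = [(x, y) for y in range(1, 4) for x in range(1, 4)]

aligned = photometric_error(template, source, pixels, (1.0, 0.0))
halfway = photometric_error(template, source, pixels, (0.5, 0.0))
unaligned = photometric_error(template, source, pixels, (0.0, 0.0))
```

In this toy setup the error shrinks as the shift approaches the true one-pixel offset, which is the signal a photometric optimization follows. No depth or mask input is needed, only the image intensities.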

Video

Code, dataset, and pretrained models

The code is hosted on GitHub (PyTorch).

Instructions for downloading the datasets and pretrained models are also provided on the GitHub page.

Publications

CVPR 2019 paper: https://arxiv.org/abs/1903.08642

BibTeX:

@inproceedings{lin2019photometric,
  title={Photometric Mesh Optimization for Video-Aligned 3D Object Reconstruction},
  author={Lin, Chen-Hsuan and Wang, Oliver and Russell, Bryan C and Shechtman, Eli and Kim, Vladimir G and Fisher, Matthew and Lucey, Simon},
  booktitle={IEEE Conference on Computer Vision and Pattern Recognition ({CVPR})},
  year={2019}
}
